Why might a pruned decision tree that does not fit the data so well be better than an unpruned one?

Pruning is useful because classification trees may fit the training data well but do a poor job of classifying new values. A simpler tree often avoids overfitting. A pruned tree has fewer nodes and less sparsity than an unpruned decision tree.

Why does pruning a tree improve accuracy?

Pruning reduces the complexity of the final classifier, and hence improves predictive accuracy by the reduction of overfitting. … A tree that is too large risks overfitting the training data and poorly generalizing to new samples. A small tree might not capture important structural information about the sample space.

What effect does Pruning a decision tree have on its bias?

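Pruning reduces the size of a decision tree by removing sections that provide little power to classify instances. Because the smaller tree is less flexible, pruning typically raises bias slightly while lowering variance.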

Why is pruning necessary in decision tree?

Pruning a decision tree helps to prevent overfitting the training data so that the model generalizes well to unseen data. Pruning means removing a subtree that is redundant or not a useful split and replacing it with a leaf node.

How can you improve the accuracy of a decision tree?

- Add more data. Having more data is always a good idea. …

- Treat missing and Outlier values. …

- Feature Engineering. …

- Feature Selection. …

- Multiple algorithms. …

- Algorithm Tuning. …

- Ensemble methods.

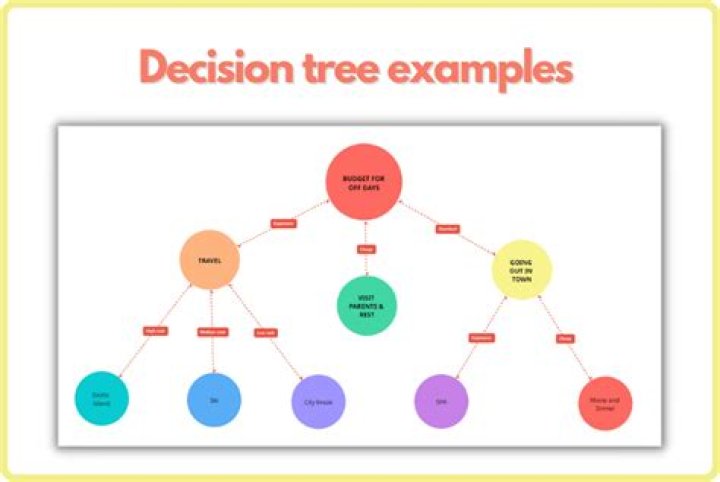

How does decision tree work?

Decision tree learners can use any of several algorithms to decide how to split a node into two or more sub-nodes. Each split is chosen to increase the homogeneity of the resulting sub-nodes. … The learner evaluates candidate splits on all available variables and then selects the split that results in the most homogeneous sub-nodes.
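As a rough illustration of "select the split with the most homogeneous sub-nodes", here is a minimal sketch that scores every candidate threshold by weighted Gini impurity. Gini is only one common homogeneity measure, and the function names and data layout (`gini`, `best_split`, rows as numeric tuples) are assumptions made for this example, not part of any particular library.

```python
from collections import Counter

def gini(labels):
    """Gini impurity of a list of class labels (0.0 means perfectly homogeneous)."""
    n = len(labels)
    if n == 0:
        return 0.0
    return 1.0 - sum((count / n) ** 2 for count in Counter(labels).values())

def best_split(rows, labels):
    """Try every feature and threshold; keep the split with the lowest weighted impurity.

    rows is a list of numeric feature tuples (an illustrative layout).
    Returns (feature_index, threshold, weighted_impurity), or None if no split exists.
    """
    best = None
    n = len(rows)
    for feature in range(len(rows[0])):
        for threshold in sorted({row[feature] for row in rows}):
            left = [y for row, y in zip(rows, labels) if row[feature] <= threshold]
            right = [y for row, y in zip(rows, labels) if row[feature] > threshold]
            if not left or not right:
                continue  # a split must send samples to both sides
            score = (len(left) * gini(left) + len(right) * gini(right)) / n
            if best is None or score < best[2]:
                best = (feature, threshold, score)
    return best

# Tiny made-up dataset: feature 0 separates the classes, feature 1 does not.
rows = [(1.0, 7.0), (2.0, 3.0), (3.0, 8.0), (4.0, 2.0)]
labels = ["a", "a", "b", "b"]
print(best_split(rows, labels))  # -> (0, 2.0, 0.0)
```

The search picks feature 0 at threshold 2.0 because both resulting sub-nodes are pure. A real learner would repeat this search recursively inside each sub-node.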

What are the advantages and disadvantages of decision trees?

A decision tree can be used to solve both classification and regression problems. Its main drawback is that it is prone to overfitting the data.

What is tree pruning?

In horticulture, pruning is when you selectively remove branches from a tree. The goal is to remove unwanted branches, improve the tree’s structure, and direct new, healthy growth.

What is pre-pruning in data mining?

Pre-pruning stops growing the tree early, before it perfectly classifies the training set. Post-pruning allows the tree to perfectly classify the training set and then prunes it back.
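A minimal sketch of pre-pruning, assuming a single numeric feature and a depth limit as the stopping rule. The `grow` function, its `max_depth` parameter, and the tuple-based tree layout are hypothetical names chosen for this example; real libraries offer other stopping rules as well, such as a minimum number of samples per leaf.

```python
from collections import Counter

def majority(labels):
    """Most frequent label in a list."""
    return Counter(labels).most_common(1)[0][0]

def impurity(labels):
    """Gini impurity of a non-empty list of labels."""
    n = len(labels)
    return 1.0 - sum((c / n) ** 2 for c in Counter(labels).values())

def grow(points, depth=0, max_depth=None):
    """Grow a tree on (x, label) pairs that have one numeric feature.

    max_depth is the pre-pruning knob: None keeps splitting until leaves are pure.
    Leaves are plain labels; internal nodes are (threshold, left, right) tuples.
    """
    labels = [y for _, y in points]
    if len(set(labels)) == 1 or (max_depth is not None and depth >= max_depth):
        return majority(labels)  # stop early and emit a leaf
    best = None
    # The largest x is skipped because it would leave the right side empty.
    for t in sorted({x for x, _ in points})[:-1]:
        left = [p for p in points if p[0] <= t]
        right = [p for p in points if p[0] > t]
        score = (len(left) * impurity([y for _, y in left])
                 + len(right) * impurity([y for _, y in right]))
        if best is None or score < best[0]:
            best = (score, t, left, right)
    if best is None:  # all x values are identical, so nothing can be split
        return majority(labels)
    _, t, left, right = best
    return (t, grow(left, depth + 1, max_depth), grow(right, depth + 1, max_depth))

def count_leaves(node):
    """Number of leaves in a tree built by grow()."""
    if not isinstance(node, tuple):
        return 1
    return count_leaves(node[1]) + count_leaves(node[2])

# Made-up training data; the point at x=4 behaves like label noise.
points = [(1, "a"), (2, "a"), (3, "b"), (4, "a"), (5, "b"), (6, "b")]
print(grow(points))               # fully grown tree
print(grow(points, max_depth=1))  # pre-pruned tree
```

With no limit the tree needs four leaves to isolate the noisy point at x=4; with `max_depth=1` it stops after a single split and keeps two leaves.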

What is pruning in data mining?

Pruning means changing the model by deleting the child nodes of a branch node. The pruned node is then regarded as a leaf node. Leaf nodes cannot be pruned. A decision tree consists of a root node, several branch nodes, and several leaf nodes. The root node represents the top of the tree.

Why do decision trees have low bias?

Unpruned decision trees have extremely low bias because they can fit the training data almost perfectly. In a taxi-fare regression example, each “prediction” made on the validation set is in essence the fare of some taxi ride in the training data that ended up in the same final leaf node as the ride whose fare is being predicted.

What is bias in decision tree?

The bias of a model measures how close its predictions are, on average, to the actual values. Note that bias is not a property of a single fitted model: it encapsulates the scenario in which we collect many datasets, fit a model to each dataset, and average the error over all of the models.
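The "average over many datasets" idea can be written down with the standard squared-error decomposition. The notation is an assumption introduced here for illustration: $f$ is the unknown true function, $\hat{f}_D$ is the model fitted on a random training set $D$, and $y = f(x) + \varepsilon$ with zero-mean noise of variance $\sigma^2$.

```latex
\mathrm{Bias}\big[\hat{f}(x)\big] = \mathbb{E}_{D}\big[\hat{f}_D(x)\big] - f(x)

\mathbb{E}_{D,\varepsilon}\Big[\big(y - \hat{f}_D(x)\big)^2\Big]
  = \mathrm{Bias}\big[\hat{f}(x)\big]^2
  + \mathrm{Var}_{D}\big[\hat{f}_D(x)\big]
  + \sigma^2
```

The last term does not depend on the model, so no choice of tree size can remove it.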

Which of the following is a disadvantage of decision trees?

Decision trees are prone to overfitting. Allowing a decision tree to split to a very granular degree lets it learn every training point extremely well, to the point of perfect classification, which is overfitting.

Does more data increase accuracy?

Having more data generally increases the accuracy of your model, but there comes a stage where even adding infinite amounts of data cannot improve accuracy any further. The remaining error is what is called the natural noise of the data.

How can you improve accuracy?

- Inaccurate Data Sources. Companies should identify the right data sources, both internally and externally, to improve the quality of incoming data. …

- Set Data Quality Goals. …

- Avoid Overloading. …

- Review the Data. …

- Automate Error Reports. …

- Adopt Accuracy Standards. …

- Have a Good Work Environment.

How can you improve the accuracy of a measurement?

- Keep EVERYTHING Calibrated! …

- Conduct Routine Maintenance. …

- Operate in the Appropriate Range with Correct Parameters. …

- Understand Significant Figures (and Record Them Correctly!) …

- Take Multiple Measurements. …

- Detect Shifts Over Time. …

- Consider the “Human Factor”

What are advantages and disadvantages of decision tree in data mining?

A small change in the data can cause a large change in the structure of the decision tree, which makes it unstable. Calculations for a decision tree can sometimes become far more complex than for other algorithms, and a decision tree often takes longer to train.

What is the advantage of decision tree?

A significant advantage of a decision tree is that it forces the consideration of all possible outcomes of a decision and traces each path to a conclusion. It creates a comprehensive analysis of the consequences along each branch and identifies decision nodes that need further analysis.

What are the benefits of decision tree?

- Clearly lay out the problem so that all options can be challenged.

- Allow us to analyze fully the possible consequences of a decision.

- Provide a framework to quantify the values of outcomes and the probabilities of achieving them.

What is decision tree in data science?

A decision tree is a type of supervised machine learning used to categorize or make predictions based on how a previous set of questions were answered. … Decision trees imitate human thinking, so it’s generally easy for data scientists to understand and interpret the results.

How do you prune a decision tree?

Post-pruning is also known as backward pruning. Here, the decision tree is generated first and non-significant branches are then removed. In other words, we begin by generating the complete tree and then adjust it with the aim of improving accuracy on unseen instances.
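One simple way to do this is reduced-error pruning, sketched below under stated assumptions: the tree is a nested `(threshold, left, right)` tuple over a single numeric feature, leaves are plain labels, and a separate validation set decides whether a subtree stays. The tree, the data, and the function names are all made up for the example, and other post-pruning strategies (such as cost-complexity pruning) work differently.

```python
from collections import Counter

def predict(node, x):
    """Walk from the root to a leaf and return its label."""
    while isinstance(node, tuple):
        threshold, left, right = node
        node = left if x <= threshold else right
    return node

def errors(node, points):
    """Number of (x, label) pairs the tree (or a bare leaf label) gets wrong."""
    return sum(predict(node, x) != y for x, y in points)

def prune(node, train, val):
    """Bottom-up reduced-error pruning.

    A subtree is replaced by a leaf (the majority training label reaching it)
    whenever the leaf makes no more validation errors than the subtree.
    Ties favour the leaf, so branches with no validation evidence are removed.
    """
    if not isinstance(node, tuple) or not train:
        return node
    t, left, right = node
    left = prune(left, [p for p in train if p[0] <= t], [p for p in val if p[0] <= t])
    right = prune(right, [p for p in train if p[0] > t], [p for p in val if p[0] > t])
    subtree = (t, left, right)
    leaf = Counter(y for _, y in train).most_common(1)[0][0]
    return leaf if errors(leaf, val) <= errors(subtree, val) else subtree

# A hand-written, fully grown tree that fits the training set perfectly,
# including the noisy point at x=4.
train = [(1, "a"), (2, "a"), (3, "b"), (3.2, "b"), (4, "a"), (5, "b"), (6, "b")]
val = [(1.5, "a"), (2.5, "b"), (3.8, "b"), (4.5, "b"), (5.5, "b")]
full_tree = (2, "a", (4, (3.5, "b", "a"), "b"))

pruned = prune(full_tree, train, val)
print(pruned)
print(errors(full_tree, train), errors(pruned, train))  # training errors
print(errors(full_tree, val), errors(pruned, val))      # validation errors
```

In this toy case the pruned tree collapses to a single split. It makes one more mistake on the training set than the full tree but one fewer on the validation set. Because the validation set was used to choose the pruned tree, an honest accuracy estimate would need a further held-out set.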

Why is tree pruning useful in tree induction method?

When decision trees are built, many of the branches may reflect noise or outliers in the training data. Tree pruning methods address this problem of overfitting the data. Tree pruning attempts to identify and remove such branches, with the goal of improving classification accuracy on unseen data.

What is pruning in decision tree medium?

In general, pruning is the removal of selected parts of a plant, such as buds, branches, and roots. Pruning a decision tree does the same job: it removes branches of the tree to overcome overfitting.

What is the advantage of pruning?

Pruning removes dead and dying branches and stubs, allowing room for new growth and protecting your property and passersby from damage. It also deters pest and animal infestation and promotes the plant’s natural shape and healthy growth.

When should trees be pruned?

Generally, the best time to prune or trim trees and shrubs is during the winter months. From November through March, most trees are dormant which makes it the ideal time for the following reasons: Trees are less susceptible to insects or disease.

What is difference between trimming and pruning?

Pruning is used to remove unnecessary branches. Trimming, on the other hand, promotes healthy growth. Both services are performed at separate times of the year, using vastly different pieces of equipment, to provide a better aesthetic and healthier landscape.

Why is tree pruning useful in decision tree induction? What is a drawback of using a separate set of tuples to evaluate pruning?

If the separate set of tuples is skewed, then using it to evaluate the pruned tree would not be a good indicator of the pruned tree’s classification accuracy. Furthermore, using a separate set of tuples to evaluate pruning means there are fewer tuples to use for creation and testing of the tree.

How are decision trees useful in data mining?

Decision Tree is used to build classification and regression models. It is used to create data models that will predict class labels or values for the decision-making process. The models are built from the training dataset fed to the system (supervised learning).

How does pruning promote growth?

Pruning stimulates growth closest to the cut in vertical shoots; farther away from cuts in limbs 45° to 60° from vertical. Pruning generally stimulates regrowth near the cut (Fig. 6). Vigorous shoot growth will usually occur within 6 to 8 inches of the pruning cut.

How can a decision tree generate high variance and low bias?

The tree becomes highly tuned to the data present in the training set. But when a new data point is fed, even if one of the parameters deviates slightly, the condition will not be met and it will take the wrong branch. A complicated decision tree (e.g. deep) has low bias and high variance.
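The trade-off can be seen in a small simulation. This is a sketch under simplifying assumptions: there is one numeric feature, the target function `true_f` and the noise level are invented, and the two predictors stand in for the extremes of tree size. With one feature, distinct x values, and midpoint thresholds, a regression tree grown until every leaf holds one training point returns the value of the nearest training point, while a tree with a single leaf returns the training mean.

```python
import random
from statistics import mean, pvariance

def true_f(x):
    return 2.0 * x  # hypothetical target function, chosen only for illustration

def sample_dataset(rng, n=20, noise=1.0):
    """Draw a fresh noisy training set of (x, y) pairs with x in [0, 1)."""
    xs = [rng.random() for _ in range(n)]
    return [(x, true_f(x) + rng.gauss(0.0, noise)) for x in xs]

def full_tree_predict(train, x0):
    """Stand-in for a fully grown tree: return the y of the nearest training x."""
    return min(train, key=lambda p: abs(p[0] - x0))[1]

def single_leaf_predict(train, x0):
    """Stand-in for a tree pruned to one leaf: return the mean training y."""
    return mean(y for _, y in train)

def bias_and_variance(predict, x0, rounds=300, seed=0):
    """Estimate bias and variance of the prediction at x0 over many training sets."""
    rng = random.Random(seed)
    preds = [predict(sample_dataset(rng), x0) for _ in range(rounds)]
    return mean(preds) - true_f(x0), pvariance(preds)

for name, model in [("fully grown", full_tree_predict), ("single leaf", single_leaf_predict)]:
    bias, variance = bias_and_variance(model, x0=0.9)
    print(f"{name}: bias={bias:+.2f} variance={variance:.2f}")
```

The fully grown stand-in should show a bias near zero and a variance close to the noise variance, while the single-leaf stand-in should show a large bias and a much smaller variance. Pruning moves a tree from the first extreme toward the second.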

Why do decision trees have high variance?

A decision tree has high variance because, if you imagine a very large tree, it can basically adjust its predictions to every single input. For example, a deep tree might chain a long list of conditions that ends in “THEN team A wins.” If the tree is very deep, the rule becomes very specific, and you may have only one such game in your training data.